On May 24th, a great meetup on Observability and Security took place. Security is a topic I want (and need) to get up to speed on, so this was a good opportunity to do so. As members of the observability community, we owe our gratitude to teams like Data Driven Network Operations and Assurance at T-Mobile, which contribute greatly to this community. So let us express our heartfelt appreciation to Erwin Halmans and his team.

Takeaways

Differences in observability use cases

Over the past 25 years, I have worked on monitoring and observability across a diverse range of projects, each with its own use case. Whether I was monitoring long-haul SDH and DWDM fibre-optic networks or mobile networks, my focus has always been on identifying the metrics, logs, or other indicators that reveal service degradation or customer impact.

During the meetup, the Data Driven Network Operations and Assurance team presented an architectural overview of their service components, combined with a business viewpoint. Jan Stegink’s insightful presentation provided valuable information on where their data streams originate.

Use logs to your benefit

T-Mobile uses logs from various sources in its observability use case. Based on this data, the team has created multiple dashboards and alerts. The alerts are sent to the NOC, which takes immediate action when necessary and reviews the dashboards. The ultimate goal is to use data and observability to resolve issues proactively, eliminating the need to send alerts to the NOC and preventing any negative impact on the business.

The Data Driven Network team receives logs from many different data sources. Given the volume and diversity of this data, they use ‘Vector’ as a lightweight data shipper. Vector transforms events with Vector Remap Language (VRL), an expression-oriented language designed for transforming observability data (logs and metrics) in a safe and performant manner. It has a simple syntax and a rich set of built-in functions tailored specifically to observability use cases.
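To make the idea of a remap step concrete, here is a minimal sketch in Python (not VRL) of the kind of work such a transform does: turning a raw `key=value` log line into a structured event and deriving a field that alerting can use. In Vector this would be a `remap` transform using VRL functions such as `parse_key_value`; the function name `remap`, the `level`/`is_error` fields, and the sample log line below are hypothetical and only illustrate the concept, not T-Mobile's actual pipeline.

```python
import shlex
from typing import Any


def remap(raw: str) -> dict[str, Any]:
    """Turn a raw key=value log line into a structured event (hypothetical schema)."""
    # Keep the original line, as a shipper would typically do.
    event: dict[str, Any] = {"message": raw}
    # Split on whitespace while respecting quoted values, then split each token on the first '='.
    for token in shlex.split(raw):
        if "=" in token:
            key, value = token.split("=", 1)
            event[key] = value
    # Normalise the (assumed) 'level' field and derive a flag that dashboards/alerts could use.
    level = str(event.get("level", "info")).lower()
    event["level"] = level
    event["is_error"] = level in {"error", "critical"}
    return event


if __name__ == "__main__":
    line = 'host=router-01 level=ERROR msg="link down on xe-0/0/1"'
    print(remap(line))
```

Doing this normalisation close to the source means every downstream consumer (dashboards, alerts, the NOC) sees the same field names and values.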

For metrics, they use Telegraf to collect model-driven telemetry. Telegraf is written in Go and compiles into a single binary with no external dependencies, which keeps its memory footprint minimal. Finally, they use Kafka Connect to distribute the data to Elastic Cloud.

Security

The second presentation was given by Remco Sprooten, a member of the Elastic Security Labs team. He gave a demo titled ‘Hunting for Persistence: real-life examples of threat hunting in action.’ It was really cool to see real examples of hacking and gain insight into the mindset of attackers; it showed how simple hacking can be in some cases.

Usually, traces of an intrusion can be found in the logs, which can then be used in Elastic Security to improve visibility. However, sometimes an attacker leaves no traces in the logs at all. As Remco explained, there are still ways to determine whether undesired activity has taken place in such cases. By looking at the right information that is available and leveraging the MITRE ATT&CK framework, it is possible to uncover signs of malicious activity.

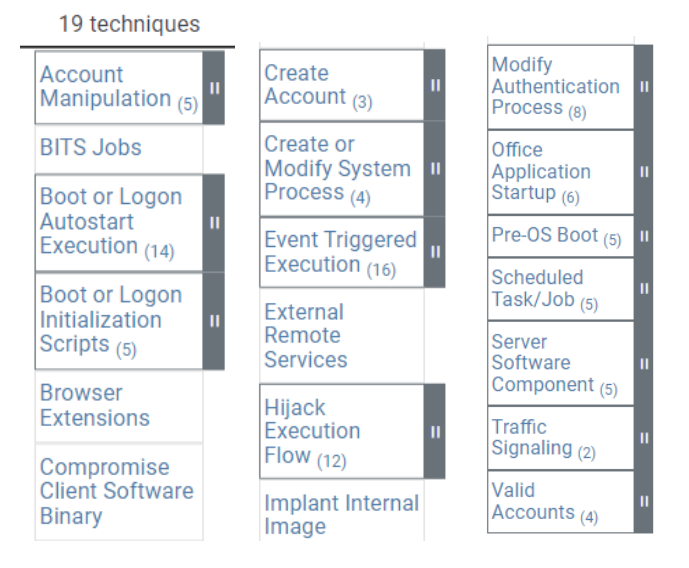

The session focused on some of the common ‘persistence’ techniques documented in the MITRE ATT&CK framework. Adversaries use these techniques to keep access to systems across restarts, changed credentials, and other interruptions that could cut off that access.

Training

Elastic has rolled out new security training content, which is available in standard training subscriptions.

New Elastic Network Security Analyst learning path

What is the Network Analyst path?

The learning path is a series of seven individual training modules. Each module includes ~8 hours of on-demand, lab-based content and teaches the skills necessary to perform full-spectrum threat detection using the Elastic Stack and various open source tools.

Who is the path designed for?

Security professionals (e.g. Security Analysts & Engineers, Cyber Operators, etc.) who are responsible for analyzing network data and identifying malicious actors.

What modules are included in the path?

- Linux Fundamentals: Basic operational skills for the Linux command line

- Network Protocol Analysis: Network protocols including DHCP, ARP, ICMP, LDAP, Kerberos, SMTP, and SSH

- Packet Analysis: Networking concepts and packet tools including Wireshark, Stenographer, and Docket

- Intrusion Detection System (IDS) log analysis with Suricata: Configure Suricata to automate detections of malicious network traffic

- Network Metadata log analysis with Zeek: Install and configure Zeek to improve network security monitoring

- Kibana for Security Analysts: Correlate different data sources and analyze what’s happening in your network

- Threat Hunting Capstone with Network Telemetry: Unguided hunt focused on analyzing network data and identifying malicious actors

So, given Remco’s talk and my interest in security, these training modules are at the top of my list.

Happy hunting!