Automation

Within Automation we see the following ways to control your costs:

- Automate by standardization

- Efficiency with pipelines

- Meeting demands

- Optimize by sizing right

Automate by standardization

With the move to the cloud, many IT teams have adopted agile development methods. These teams need to deploy their solutions to the cloud repeatedly, iterate quickly, and know that their infrastructure is in a reliable state. They also need to manage infrastructure and application code through a unified process.

To meet these challenges, the IT team can automate deployments and use the practice of infrastructure as code (IaC). In code, you define the infrastructure that needs to be deployed. The infrastructure code becomes part of your project. Just like application code, you store the infrastructure code in a source repository and version it. Anyone on your team can run the code and deploy similar environments.

To provision infrastructure in the cloud, you can click through all the required resources and options manually, or you can use pre-populated or self-made templates. The templates are standardized and created for services or application architectures so that provisioning is quick and reliable.

All major cloud providers offer their own infrastructure-as-code solution, typically in the form of JSON- or YAML-based templates. These declarative configuration files are often uploaded to a hosted service in the target cloud, which then processes them to create, update, or delete resources as necessary.
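As a minimal sketch of the template idea, the Python snippet below renders one standardized definition into a JSON document per environment. The schema (`environment`, `resources`, `size`) and the function name `render_template` are assumptions made for illustration; they do not correspond to any specific provider's template format.

```python
import json


def render_template(environment: str, vm_count: int, vm_size: str = "small") -> str:
    """Render a hypothetical, provider-neutral infrastructure template as JSON."""
    resources = [
        {"type": "virtual_machine", "name": f"{environment}-vm-{i}", "size": vm_size}
        for i in range(1, vm_count + 1)
    ]
    template = {"environment": environment, "resources": resources}
    # sort_keys makes the output deterministic, so versions diff cleanly in a repository
    return json.dumps(template, indent=2, sort_keys=True)


if __name__ == "__main__":
    print(render_template("test", vm_count=2))
```

Because the same function produces every environment, a test and a production deployment differ only in the parameters passed in, which is the point of standardization.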

Efficiency with pipelines

A pipeline is a process that drives software development through a path of building, testing, and deploying code, also known as Continuous Integration and Continuous Delivery (CI/CD). Steps in the pipeline can include compiling code, running unit tests, code analysis, security checks, and creating binaries. For containerized environments, the pipeline also packages the code into a container image to be deployed across a hybrid cloud.

The objective is to minimize human error, maintain a consistent process for how software is released, and gain efficiency. Pipelines allow IT organizations to build and deploy their applications and IT systems to production environments reliably and efficiently. Combined with pipelines, the cloud-based delivery model offers speed and agility to the business.
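The build, test, and deploy path can be sketched as an ordered list of stages that stops at the first failure. This is an illustrative toy runner, not the behavior of any particular CI/CD product; the stage names and the `run_pipeline` function are assumptions.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a callable that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure; return a result log."""
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'failed'}")
        if not ok:
            break  # later stages (such as deploy) never run on a broken build
    return log


if __name__ == "__main__":
    stages = [("build", lambda: True), ("test", lambda: True), ("deploy", lambda: True)]
    for line in run_pipeline(stages):
        print(line)
```

Stopping at the first failed stage is what keeps a broken build from reaching production without anyone having to intervene manually.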

Meeting demands

To meet demand, organizations subscribe to enough capacity to cover their peak. But no environment is fully utilized 100% of the time, so most of the time the hardware and software sit idle, and so does your money. Autoscaling can help you meet demand while reducing costs.

Autoscaling can be done vertically and horizontally. Vertically means that you scale up (or down) the capacity of a resource. Horizontally means that you scale out (or in) the number of instances of a resource. For this, you have to understand (and forecast) your demand, perform tests to see if it is possible, and implement a continuous capacity management process.

If done correctly, autoscaling allows for better fault tolerance, better availability, and better cost management. It scales quickly up and down to meet traffic demands while keeping your costs within budget, and it detects and replaces components that are no longer healthy.
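A horizontal scaling decision can be sketched with a simple target-tracking rule: scale the instance count in proportion to how far utilization is from a target, then clamp the result to a minimum and maximum. The 60% target, the bounds, and the function name are assumptions for illustration; real autoscalers add cooldowns and health checks on top of a rule like this.

```python
def desired_instances(current: int, utilization_pct: int, target_pct: int = 60,
                      minimum: int = 1, maximum: int = 10) -> int:
    """Return the instance count that would bring utilization back to the target."""
    if current < 1 or target_pct < 1:
        raise ValueError("current and target_pct must be at least 1")
    # Integer ceiling division: round up so capacity is never undersized.
    wanted = (current * utilization_pct + target_pct - 1) // target_pct
    # The maximum caps cost; the minimum protects availability.
    return max(minimum, min(maximum, wanted))


if __name__ == "__main__":
    print(desired_instances(current=4, utilization_pct=90))  # scale out
    print(desired_instances(current=4, utilization_pct=15))  # scale in
```

The `maximum` bound is the cost-control lever here: it is what keeps an unexpected traffic spike from turning into an unexpected bill.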

Optimize by sizing right

Sizing resources right is based on your actual usage data, but how much of your data should you look at? Rightsizing is the process of defining the optimal cloud infrastructure for the current and near-term needs of workloads, in a way that balances risk and cost to minimize waste.

Cloud resources are elastic, scalable, and provisioned on demand. If these resources are sized right, costs are controlled and money is saved. Sizing your environment correctly helps your organization control (and lower) its costs and adjust the infrastructure to match the workload as needed.

Optimization by sizing right means that with insights on usage the IT organization is able to modify, upgrade or downgrade, existing cloud infrastructure to balance and meet the exact demand of the business. The public cloud allows you to rightsize a wide range of services before they incur the total monthly costs.
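One way to turn usage data into a sizing decision is to take a high percentile of observed CPU demand, add headroom, and pick the smallest size that covers it. The sketch below assumes a hypothetical catalog of size names mapped to vCPU counts, a 95th percentile, and 20% headroom; all three are illustrative choices, not recommendations.

```python
from typing import Dict, List


def rightsize(cpu_samples: List[float], catalog: Dict[str, int],
              headroom_pct: int = 20) -> str:
    """Return the smallest catalog size covering p95 vCPU usage plus headroom."""
    if not cpu_samples:
        raise ValueError("no usage data")
    ordered = sorted(cpu_samples)
    # Nearest-rank 95th percentile, computed with integer math.
    rank = (95 * len(ordered) + 99) // 100
    p95 = ordered[rank - 1]
    required = p95 * (100 + headroom_pct) / 100
    # Walk the catalog from smallest to largest and stop at the first fit.
    for name, vcpus in sorted(catalog.items(), key=lambda kv: kv[1]):
        if vcpus >= required:
            return name
    raise ValueError("no size in the catalog is large enough")


if __name__ == "__main__":
    catalog = {"small": 2, "medium": 4, "large": 8}  # hypothetical sizes (vCPUs)
    samples = [1.0] * 18 + [3.0, 7.0]                # vCPUs in use per sample
    print(rightsize(samples, catalog))
```

Using a percentile instead of the absolute peak is the risk-versus-cost trade-off in practice: the single 7.0 spike in the sample data is deliberately not sized for.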

Conclusion on Automation

Automation is a fundamental building block of cloud computing and a very important part of controlling cloud IT costs. It can make cloud computing fast, efficient, and as hands-off as possible, and lets your IT employees do what they were hired to do.

Using standardized templates and having a good process to rightsize the needed resources are the first steps toward automation. Autoscaling further allows scaling of the needed resources up and down, both vertically and horizontally. These sub-solutions within automation help your IT administrators ensure that performance is optimal.

Clean-up

Within Clean-up we see the following ways to control your costs:

- Efficient use

- Shut down unused resources

- Spot instances

Efficient use

Race cars and sports cars are very different, as they are made for two different purposes. Sports cars are used for everyday driving and are street legal. Race cars are not street legal and are built for one very specific purpose: going around a track as fast as possible. The same kind of difference exists between your on-premises data center and a cloud data center.

To use a cloud data center to its fullest potential, use it efficiently; this is a way to control costs. You can improve efficiency by checking whether your current cloud resources are used efficiently and by combining resources to lower your costs.

Shut down unused resources

Cloud clean-up can target the number of vendors (from many to one or two), but also the number of cloud instances already running in your environment. Through automation, organizations can simplify processes and eliminate time-consuming manual operations.

The easiest way to optimize cloud costs is to look for unused or unattached resources. Shutting down unused instances, especially during weekends and at the end of the workday, is the first step in optimizing costs. The recommended action, shutting down or resizing, depends on the resource being evaluated.
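Shutting down outside working hours can be expressed as a small schedule check that an automation job evaluates before starting or stopping non-production instances. The 08:00 to 18:00 weekday window and the function name are assumptions for illustration.

```python
from datetime import datetime


def should_run(now: datetime, start_hour: int = 8, end_hour: int = 18) -> bool:
    """Return True if a non-production instance should be running at `now`."""
    is_weekday = now.weekday() < 5  # Monday is 0, Sunday is 6
    return is_weekday and start_hour <= now.hour < end_hour


if __name__ == "__main__":
    print(should_run(datetime(2024, 1, 3, 10)))  # a Wednesday morning
    print(should_run(datetime(2024, 1, 6, 10)))  # a Saturday morning
```

With this assumed window an instance runs 50 of the 168 hours in a week, so roughly 70% of its running hours are avoided.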

Spot instances

Cloud vendors like AWS, Google, and Microsoft have services where you can request unused instances to reduce compute costs and improve application throughput.

Spot instances enable an organization to deploy a broader variety of workloads while enjoying access to discounted pricing compared to pay-as-you-go rates. Spot VMs offer the same characteristics as pay-as-you-go virtual machines; the differences are pricing and evictions. Spot VMs can be evicted at any time if the cloud vendor needs the capacity.

Workloads that are ideally suited to run on Spot VMs include but are not necessarily limited to the following:

- Batch jobs

- Workloads that can sustain or recover from interruptions

- Development and test

- Stateless applications that can use Spot VMs to scale out, opportunistically saving cost

- Short-lived jobs that can easily be run again if the VM is evicted

Spot instances are an excellent way to reduce cloud costs, but they can be complex to manage.
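What makes a workload able to recover from interruptions is usually a checkpoint: the job remembers how far it got and resumes from there after an eviction. The sketch below simulates this in-process. The `Evicted` exception, the `run_with_resume` function, and the retry limit are hypothetical; a real job would persist its checkpoint outside the VM.

```python
from typing import Callable, Sequence


class Evicted(Exception):
    """Raised by the (hypothetical) worker when the spot VM is reclaimed."""


def run_with_resume(items: Sequence[str], handle: Callable[[str], None],
                    max_attempts: int = 5) -> int:
    """Process all items, resuming after evictions; return the attempts used."""
    done = 0       # checkpoint: number of items already finished
    attempts = 0
    while done < len(items):
        attempts += 1
        if attempts > max_attempts:
            raise RuntimeError("too many evictions")
        try:
            for item in items[done:]:  # resume from the checkpoint
                handle(item)
                done += 1
        except Evicted:
            continue  # a replacement VM picks up where the last one stopped
    return attempts
```

Finished items are never reprocessed, so an eviction costs only the item that was in flight. This is why batch and short-lived jobs fit spot capacity well.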

Conclusion on Clean-up

Everybody loves a good clean-up, whether it is your desk, your house, or the cloud environment. A good and thorough clean-up improves productivity and, when done regularly, cuts down costs.

Efficient usage and shutting down unused resources sound simple and logical, but they are often overlooked or simply not done. Using Spot instances looks appealing from a cost perspective, but there is an important caveat: reliability is not guaranteed. The cloud provider can interrupt these instances at short notice to reclaim capacity. However, with careful management, spot instances can be useful for batch processing and high-performance computing (HPC) clusters, web server clusters, and many other workloads.

Observability

Within Observability we see the following ways to control your costs:

- Faster & predictive IT Operations

- Monitoring

- Visibility

Faster & predictive IT Operations

Cutting through IT operations noise and correlating operations data from multiple IT environments requires an IT operations team to identify root causes and propose solutions faster and more accurately than is humanly possible.

Artificial Intelligence (AI) can help achieve what is humanly impossible, including a faster mean time to resolution. Artificial Intelligence for IT Operations (AIOps) uses artificial intelligence to simplify IT operations management and to accelerate and automate problem resolution in complex modern IT environments.

Because AI never stops learning, AIOps keeps getting better at identifying less-urgent alerts or signals that correlate with more-urgent situations. This means it can provide predictive alerts that let IT teams address potential problems before they lead to slow-downs or outages.

Instead of being bombarded with every alert from every environment, operations teams using AIOps only receive alerts that meet specific service-level thresholds or parameters. The more AIOps learns and automates, the more it helps ‘keep the lights on’ with less human effort, and the more your IT operations team can focus on tasks with greater strategic value to the business.

Monitoring

Monitoring has been around for decades as a way for IT operations to gain insight into the availability and performance of its systems. To meet the growing market demands and drive more revenue, IT organizations require a deeper and more precise understanding of what is happening across their IT environments. This is not easy as today’s infrastructure and applications span multiple environments and are more dynamic, distributed, and modular in nature.

Monitoring relies on building dashboards and alerting to escalate known problem scenarios when they occur. With monitoring, a problem can be seen quickly, and therefore action can be taken faster. The sooner the problem is identified and solved, the less money is spent.
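An alert for a known problem scenario is often a threshold rule that fires only when the condition persists, so that a single spike does not page anyone. The sketch below assumes a metric sampled at regular intervals; the function name and the default of three consecutive samples are illustrative.

```python
from typing import Sequence


def sustained_breach(samples: Sequence[float], threshold: float,
                     sustained: int = 3) -> bool:
    """Return True if the last `sustained` samples all exceed the threshold."""
    if sustained < 1:
        raise ValueError("sustained must be at least 1")
    if len(samples) < sustained:
        return False  # not enough data yet to call it a sustained problem
    return all(value > threshold for value in samples[-sustained:])


if __name__ == "__main__":
    cpu_pct = [50, 91, 95, 97]
    print(sustained_breach(cpu_pct, threshold=90))
```

The same rule works for spend: feeding it daily cost figures and a daily budget turns it into a simple overspend alert.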

Visibility

Visibility in the cloud means eliminating the blind spots that can lead to overspends, performance inefficiencies, and security issues. If your business loses visibility in the cloud, it can result in a loss of control over IT management and data security.

An example of how a business loses control when it loses visibility is when individual departments set up their own Line of Business IT environments (also known as shadow IT). These departments can launch unapproved applications – sometimes duplicating the cost and capabilities of existing approved applications – and generate data that may go into the cloud and never come back.

Besides leaving applications and their data unmanaged, which can cause compliance issues and potential data loss, shadow IT environments aren’t included in any disaster recovery and/or business continuity plans.

So how can you pay attention to something you cannot see? Part of the reason cloud costs get out of control is that few people notice them. That doesn’t mean the IT organization does not care. Most engineers, if shown the costs, will actually try to fix the problem themselves. This is called the Prius effect, after the dashboard display in the Toyota Prius that shows fuel consumption in real time and nudges drivers toward more economical driving. Cloud computing is similar: making visible what is going on makes people aware of their cloud usage and spending and (hopefully) improves their behavior.

Conclusion on Observability

Modern multi-cloud environments with extensive collections of distributed apps are increasingly complex and dynamic, rendering older monitoring and managing approaches ineffective and almost obsolete. The challenge within observability lies in the fact that:

- Cloud environments are (very) complex

- Monitoring tools are siloed and not adequate to monitor the cloud fully

- There is a high frequency of updates and a need for real-time visibility

Observability enables informed business decisions through deeper and better insights, more efficient IT, and a reduction of deployment time for new services. If you can’t monitor your servers, containers, and data in the cloud, you can’t analyze and fix problems at the speed you need. Given the complexity of cloud infrastructure and the amount of data processed, observability is more important than ever.

By observing the status of your cloud environment, you make visible what is going on. By monitoring via dashboards and alerts, you eliminate the blind spots that lead to overspends, performance inefficiencies, and security issues. Making visible what is going on, what the costs are, and what the impact on the business is makes people aware of their cloud usage and spending, and enables improvement.

The overarching benefit of AIOps, now and in the future, is that it enables IT operations to identify, address, and resolve slow-downs and outages faster than they can by sifting manually through alerts from multiple IT operations tools.

Cloud-native architecture

Within Cloud-native architecture we see the following ways to control your costs:

- Faster time to market

- High level of self-service

- Managed services

- Serverless architecture

Faster time to market

Speed and quality of service are important requirements in a rapidly growing and evolving IT world. Automation makes it possible to ensure that the systems are performing optimally and that all requests regarding deployment and allocation are fulfilled quickly and efficiently.

The DevOps approach aims to shorten your Software Development Life Cycle, enabling organizations to gain a faster time to market with their products and focus on a customer-centric approach. Consequently, updates and subsequent releases become faster and better as well.

Reducing development time, overproduction, over-engineering, and technical debt can lower the overall development costs. Similarly, improved productivity can result in increased revenue.

High level of self-service

Within cloud architecture, self-service is a key attribute. Whether you deploy apps on an elastic, virtual, or shared environment, your apps are automatically realigned to suit the underlying infrastructure, scaling up and down to suit changing workloads. This means you don’t have to seek and get permission from the server, load balancer, or a central management system to create, test, or deploy IT resources. Waiting time is reduced and IT management is simplified.

Managed cloud services

From migration and configuration to management and maintenance, cloud architecture allows organizations to fully leverage cloud-managed services to manage the infrastructure efficiently while optimizing time and costs. Since each service has its own independent lifecycle, managing it as an agile DevOps process is simplified.

Think of cloud services where serverless compute engines enable organizations to build applications without the need to manage servers via a pay-per-usage model. With the help of these services, IT organizations can easily set up and manage a cloud development environment with minimal costs and effort.

Serverless architecture

The term “Serverless” might be confusing. There will always be server hardware and processes running somewhere, but the difference compared to normal architecture is that organizations that build and support a ‘Serverless’ architecture are not looking after that hardware or those processes. They are outsourcing this responsibility to someone else.

Serverless means that scaling is automatically managed, transparent, and fine-grained, and that it is tied in with automatic resource provisioning and allocation. This all happens in milliseconds. Container platforms still need your IT department to manage the size and shape of the clusters, and this takes more than a few milliseconds.

The multiple benefits of Serverless architecture are:

- Reduced operational costs -> Serverless is an outsourcing option. As an organization, you need fewer personnel to manage your IT environment, since this is done by another organization.

- Reduced development costs -> Having a serverless architecture means that common components are being used, so little to no modification is needed across multiple applications if a component such as authentication is changed.

- Scaling costs -> Scaling in a serverless architecture is done automatically, elastically, and managed by your provider. This means that you only pay for what you use. And depending on your traffic scale and shape, this can be a huge reduction in your cloud IT costs.

Conclusion on Cloud-native architecture

Cloud-native architecture is not optional; it is mandatory on your IT cloud roadmap. Since change is constant in the cloud, your organization’s environment should be flexible enough to adapt quickly to new technologies and methodologies without disturbing business operations.

By using a cloud-native architecture approach, your organization will have a faster time to market for your applications and lower operational costs due to a higher level of self-service and managed services.

Governance

Within Governance we see the following ways to control your costs:

- Control the process

- Cut costs by automation

- Look at cloud governance

- Tags

Control the process

Cloud governance is an iterative process with multiple processes. For organizations with existing policies that govern on-premises IT environments, cloud governance should complement these policies. The level of corporate policy integration between on-premises and the cloud varies depending on cloud governance maturity and the nature of the digital estate in the cloud.

As the cloud estate changes over time, so do cloud governance processes and policies. Governance frameworks, such as NIST, are useful starting points to help guide your organization’s governance practices.

Controlling the processes within the organization increases productivity and decreases throughput and waiting times.

Cut costs by automation

Automation is essential to governance. Cloud environments are dynamic and can scale to large numbers of resources, components, and services. Take advantage of cloud service features that support governance by looking at the ways to cut costs within automation as explained before.

Look at cloud governance

Cloud governance is the set of rules under which a company operates in the cloud. The rules can relate to how much a department can spend in the cloud, what the cloud can be used for, and what security precautions need to be taken to reduce cloud risks. In some ways, cloud governance is the same as having rules for how an on-premises IT environment operates.

Check the legal requirements, but also check internal standards and governance. Although using the public cloud might be legally allowed, it may be against company rules to place everything in the public cloud.

Tags

Tagging is an important part of any organization’s cloud governance strategy. You might have environments, such as Test or Production, that use resources across multiple subscriptions owned by different teams. To better understand the full cost of the workloads, tag the resources that they use. When tags are applied properly, you can apply them as a filter in cost analysis to better understand trends.
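Using a tag as a cost-analysis filter amounts to grouping resource costs by tag value. The sketch below assumes each resource is a dictionary with a `tags` mapping and a `monthly_cost` figure; this record shape is hypothetical and stands in for whatever your provider's billing export contains.

```python
from collections import defaultdict
from typing import Dict, List


def cost_by_tag(resources: List[dict], tag_key: str) -> Dict[str, float]:
    """Sum monthly cost per value of `tag_key`; untagged resources are grouped."""
    totals: Dict[str, float] = defaultdict(float)
    for resource in resources:
        value = resource.get("tags", {}).get(tag_key, "untagged")
        totals[value] += resource["monthly_cost"]
    return dict(totals)


if __name__ == "__main__":
    resources = [
        {"tags": {"environment": "Test"}, "monthly_cost": 100.0},
        {"tags": {"environment": "Production"}, "monthly_cost": 250.0},
        {"tags": {}, "monthly_cost": 25.0},
    ]
    print(cost_by_tag(resources, "environment"))
```

The `untagged` bucket is worth watching on its own: it measures how much spend cannot yet be attributed to anyone.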

The key to a successful tagging strategy is defining tagging policies extremely clearly. There could be a consistent set of tags specifically for governance purposes that apply globally. Servers, storage volumes, databases, and load balancers could all be tagged with the name of the provisioning user, as well as the team or department that they belong to. Some organizations may also implement project-based or application-based tags, depending on their specific demands.

Tags must be applied consistently by all applications and teams. Without a clear, easy-to-understand tagging policy, individuals are bound to use variations of the same tag, complicating and damaging the accuracy of reporting efforts.
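A tagging policy becomes enforceable once it is written down as a check. The sketch below validates a resource's tags against a hypothetical policy: three required keys and a fixed set of environment names. Both sets are assumptions for illustration, not a recommended standard.

```python
from typing import Dict, List

REQUIRED_TAGS = {"owner", "team", "environment"}   # hypothetical policy
ALLOWED_ENVIRONMENTS = {"test", "production"}      # hypothetical policy


def tag_violations(tags: Dict[str, str]) -> List[str]:
    """Return a sorted list of policy violations; an empty list means compliant."""
    problems = [f"missing tag: {key}" for key in REQUIRED_TAGS - tags.keys()]
    environment = tags.get("environment")
    if environment is not None and environment not in ALLOWED_ENVIRONMENTS:
        problems.append(f"invalid environment: {environment}")
    return sorted(problems)


if __name__ == "__main__":
    # "Owner" and "prod" are the kind of variations a clear policy should catch.
    print(tag_violations({"Owner": "alice", "environment": "prod"}))
```

Keys and values are compared exactly on purpose, so that variations of the same tag are reported instead of silently accepted.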

Conclusion on Governance

The cloud and all its possibilities have a tremendous impact on IT governance. IT governance needs to be implemented within every industry, and it brings challenges in adapting and reorganizing the business itself and all its connected processes.

A major challenge of cloud governance is the breadth of topics to address. It is more practical to introduce a comprehensive governance framework incrementally, rather than in a single step. Start with the highest priority items for your organization. If your cloud spending is excessive and unsustainable, focus on cost management early in the process.

Cost reduction in Governance lies not so much in setting up new processes, automation, or tags, but mainly in following a common framework and complying with GDPR and other EU and/or local law, which prevents high fines.

(+1) License (and cost) optimization

In the age of cloud technology, the adoption of as-a-service models has rapidly increased. However, for many organizations, this has led to an adverse outcome of license costs ballooning out of control. There are thousands of cloud services, dozens of cloud service providers, and numerous Infrastructure-as-a-Service (IaaS) providers offering pricing models, each one frequently changing and upgrading its portfolio.

Knowing the best possible software licensing contract and cloud subscription to meet your needs is important. Since Devoteam has no license expertise, we talked to Quexcel, who are experts in this line of work and helped us identify the best ways to control your cloud IT costs with regard to license optimization.

Within License optimization, we see the following ways to control your costs. We recommend them as a bonus; they also prepare you to approach the market for a supplier of your Software License or Cloud Agreement:

- Monthly billing exercise

- Smart choices

- Contract optimization

Monthly billing exercise

With cloud providers, you pay for multiple line items: licensing, services, hardware, networking, power, storage, management, et cetera. You might expect these costs to be the same every month, yet this is not the case. They vary with network usage and with storage that is added or deleted. With IaaS, PaaS, et cetera, this is often not top of mind. On the seat-based side, check whether you have the correct subscription and whether you have unused (parts of) subscriptions. For example, all users have Office 365 E3, but only 90% actually use the downloadable services, or users haven’t used an online service (e.g., Project Pro or Visio) for over 90 days, but you still pay for the subscription on a monthly basis.
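The 90-day check on seat-based subscriptions can be sketched as a filter over last-use dates. The input shape (a mapping from user to the date of last use, or `None` if never used) is an assumption; in practice the dates would come from your vendor's usage report.

```python
from datetime import date
from typing import Dict, List, Optional


def unused_subscriptions(last_used: Dict[str, Optional[date]], today: date,
                         max_idle_days: int = 90) -> List[str]:
    """Return users whose subscription was never used or idle too long."""
    idle = []
    for user, used_on in last_used.items():
        if used_on is None or (today - used_on).days > max_idle_days:
            idle.append(user)
    return sorted(idle)


if __name__ == "__main__":
    usage = {"ann": date(2024, 6, 1), "bob": date(2024, 1, 15), "cy": None}
    print(unused_subscriptions(usage, today=date(2024, 6, 30)))
```

Running a check like this as part of the monthly billing exercise gives a concrete list of subscriptions to downgrade or cancel.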

Smart choices

Choosing an appropriate cost model requires many factors to be considered. All compared cloud providers offer straightforward pricing but also offer flexibility features for ad-hoc utilization requirements to meet business needs. For this Outlook, we looked at Microsoft, AWS and Google.

Pay-as-You-Go provides the quickest way to buy online services, but caveats in the compute and storage provision make this route better suited for proof of concept and small ‘quick win’ deployments. Resources are also allocated to customers in different ways: some providers offer a pre-defined pool of resources, whereas others work from a catalog of virtual machines.

Reserved Instances let organizations pay less when they commit for a longer term. Most cloud providers divide their pricing models into three categories:

On-demand instances -> let you pay for computing power per hour or per second, without long-term commitments or upfront payments. You can increase or decrease the resources available to your applications at will.

Reserved instances -> for workloads that run on the cloud in the long term, you can commit to compute instances for a period of between one to three years, with the option of paying some of the amount upfront.

Spot instances -> let you bid on unused capacity in the cloud provider’s data center. They provide the highest level of discount, up to 90 percent compared to on-demand costs.

There are several options to optimize costs and several payment options to consider. Thinking these through carefully helps prevent (monthly) bill shock.

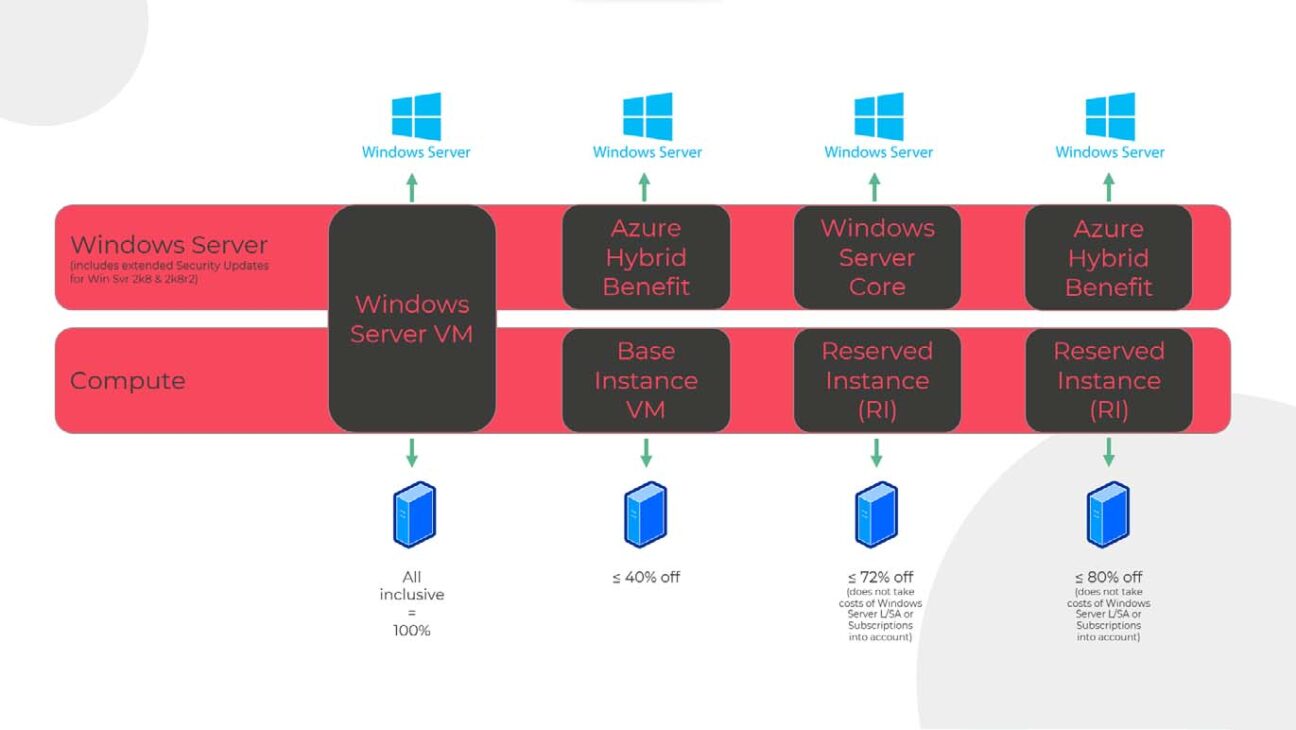

- Pay-as-you-go for all

- Pay-as-you-go (for computing) and bring your own license (for the software)

- Reserved Instances (for computing) and pay-as-you-go (for the software)

- Reserved Instances (for computing) and bring your own license (for the software)
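The choice between on-demand and reserved pricing for compute comes down to a break-even point in running hours. The sketch below uses integer cents to keep the arithmetic exact; the rates are made-up numbers for illustration and the function names are assumptions. Spot pricing is left out because evictions make it a different kind of decision.

```python
def break_even_hours(on_demand_cents_per_hour: int,
                     reserved_cents_per_month: int) -> int:
    """Hours per month at which on-demand spend reaches the reserved fee."""
    # Integer ceiling division keeps the result exact.
    return ((reserved_cents_per_month + on_demand_cents_per_hour - 1)
            // on_demand_cents_per_hour)


def cheapest_option(hours_per_month: int, on_demand_cents_per_hour: int,
                    reserved_cents_per_month: int) -> str:
    """Return 'reserved' only if it is strictly cheaper for the expected hours."""
    on_demand_total = hours_per_month * on_demand_cents_per_hour
    return "reserved" if reserved_cents_per_month < on_demand_total else "on-demand"


if __name__ == "__main__":
    # Illustrative rates: 10 cents per hour on demand, 4000 cents per month reserved.
    print(break_even_hours(10, 4000))
    print(cheapest_option(300, 10, 4000))
    print(cheapest_option(730, 10, 4000))
```

An instance that only runs during working hours may never reach the break-even point, which is why scheduling and reservation decisions are best made together.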

Contract optimization

Optimization of your contract is something you can do yourself, but if the reseller that sells you the software does it for you, it might feel like ‘a fox guarding the henhouse’. Letting an independent contractor check whether the contract best fits your situation, or whether you have subscribed to too little (or too much), is always wise.

Conclusion on License optimization

Discounts are an option from some providers for volume purchasing or utilization but typically don’t apply until large commitments have been made. Billing can be based on utilization per hour or per month, so forecasting utilization can play an important part in understanding which provider offers the better option.

Not all options are possible within the contracts available, so be smart before you start and think about answering questions like:

- How much do I want (or am I allowed) to pay?

- How often do I want to pay?

- Do I want this via a Credit Card or via an invoice?

- Which legal terms and conditions (which can differ per agreement) am I willing to approve?

- In which country do I sign the agreement (and am I willing to agree on the legal impact)?