The words "container" and "containerisation" may seem to have appeared out of nowhere in the IT industry over the past couple of years. However, to many people's surprise, the idea of containers actually dates back to the 1970s.

The initial idea was to create a sandbox-like environment in which container workloads are isolated from the production environment, so they can run without risking disruption to other services on the host machine. This enabled developers to test their application code and processes without worrying about the rest of the host system.

Early containers had many drawbacks, one being that they were far less portable than today's container technologies. Over the years, however, container technologies have improved significantly: containers can now isolate users, files and networking. For example, each container can now be assigned its own IP address.

Container technology in recent times

Over the years, more and more container deployment options and management tools have been introduced to the market. For example, public cloud providers such as Amazon Web Services (AWS) offer a multitude of services that help users provision and manage containers. Two AWS managed services stand out in particular: AWS Elastic Container Service (ECS), a managed container orchestration service for running Docker containers, and Elastic Kubernetes Service (EKS), a managed Kubernetes service.

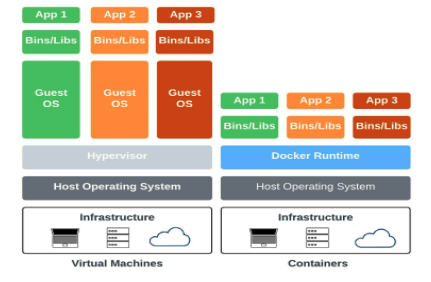

Since the 1970s, containers have gone through a significant transformation, and one game-changing development has been operating system (OS) level virtualisation. This allows a single host machine to provide multiple isolated environments. Because the host OS kernel is shared with the containers, they can be provisioned and started quickly, without the need to install a dedicated guest OS for each one.

Sharing the host OS kernel lets containers use kernel features such as namespaces, control groups (cgroups), chroot and SELinux profiles. Together, these create an isolated environment for each running container and provide the added flexibility to run a different Linux distribution from the host OS, as long as it is compatible with the host kernel and built for the same CPU architecture.
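To make the namespace idea more concrete: on Linux, each process's namespace memberships are visible under `/proc/<pid>/ns`, where every entry is a symbolic link whose target (for example `net:[<inode number>]`) identifies the namespace. Two processes share a namespace exactly when those targets match, so comparing them shows where a containerised process has been isolated from the host. The Python sketch below is illustrative only: it assumes a Linux host and permission to read the other process's `/proc` entries, and `read_namespaces` and `isolated_namespaces` are hypothetical helpers written for this article, not part of Docker or any library.

```python
import os
from pathlib import Path

# Common namespace types exposed under /proc/<pid>/ns on modern Linux kernels.
# Entries that do not exist on a given kernel are simply skipped.
NAMESPACE_TYPES = ("cgroup", "ipc", "mnt", "net", "pid", "user", "uts")


def read_namespaces(ns_dir: Path) -> dict[str, str]:
    """Map each namespace type to its identifier, e.g. 'net' -> 'net:[<inode>]'.

    Each entry in /proc/<pid>/ns is a symlink whose target names the
    namespace; two processes share a namespace when the targets match.
    """
    result: dict[str, str] = {}
    for ns_type in NAMESPACE_TYPES:
        link = ns_dir / ns_type
        if link.is_symlink():
            result[ns_type] = os.readlink(link)
    return result


def isolated_namespaces(ns_dir_a: Path, ns_dir_b: Path) -> list[str]:
    """Return the namespace types in which the two processes are separated."""
    a = read_namespaces(ns_dir_a)
    b = read_namespaces(ns_dir_b)
    # Only compare types present for both processes.
    return sorted(t for t in a.keys() & b.keys() if a[t] != b[t])
```

For example, `isolated_namespaces(Path("/proc/self/ns"), Path("/proc/<pid>/ns"))`, with `<pid>` replaced by the host PID of a containerised process, would typically include `ipc`, `mnt`, `net`, `pid` and `uts` for a default Docker container, while `user` is usually shared unless user-namespace remapping has been enabled.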

Over the past 50 years, container usage has grown steadily; the most important changes, however, have been in container management, portability, scaling and compatibility with other host operating systems such as Windows.

With this background on how containers came about and how they have evolved, you may still be asking: what exactly is a container?

In short, containers are lightweight software components that package an application, its dependencies and its configuration into a single deployable image.

How did Docker change the game?

When talking about containers or containerisation, Docker is usually the first name that comes to mind. The introduction of Docker was the catalyst that pushed companies to adopt containers with real conviction.

The company behind Docker started out as dotCloud, a Platform as a Service (PaaS) provider. dotCloud's PaaS offering benefited so much from the evolution of container technology that the company decided to open-source its container technology under the now-familiar name Docker.

Once Docker was open-sourced, its popularity and use rose rapidly, alongside management platforms such as Kubernetes, Docker Swarm, Mesosphere and even AWS ECS / EKS. Although each platform has its own opinions on best practices, they all share a common goal: making containers more accessible to developers.

Containerisation – Why bother?

Any organisation, whatever its size, that wants to adopt containers must understand that doing so requires much more than orchestration and management tools. In recent times, terms like Docker and Kubernetes have often become buzzwords, and organisations do not always know why they want to adopt the technology.

At Devoteam, we worked with a client who wanted to containerise their application but was unsure whether it was the right approach. On further investigation into the client's underlying problem, we found that they were struggling to launch their application into production because of a long time-to-market.

Based on our findings, our highly experienced Devoteam consultants designed a solution using Docker containers, AWS managed services and a CI/CD pipeline. The pipeline builds the container images and pushes them to AWS Elastic Container Registry (ECR), runs a health check on the container and, finally, deploys the container to AWS ECS.
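The health-check stage of a pipeline like this can be as simple as polling an HTTP endpoint on the freshly started container and failing the stage if it never responds successfully. The Python sketch below uses only the standard library and assumes the application exposes a health endpoint over HTTP; the function name, the example URL `http://localhost:8080/health` and the timeout values are hypothetical illustrations, not details of the client's actual pipeline.

```python
import time
import urllib.request


def wait_until_healthy(url: str, timeout_s: float = 30.0, interval_s: float = 1.0) -> bool:
    """Poll `url` until it returns a 2xx status or `timeout_s` elapses.

    Returns True if the container reported healthy in time, otherwise False,
    so the calling pipeline stage can stop before the deploy step.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with urllib.request.urlopen(url, timeout=interval_s) as resp:
                if 200 <= resp.status < 300:
                    return True
        except OSError:
            # URLError, HTTPError (non-2xx), refused connections and timeouts
            # are all OSError subclasses: treat them as "not healthy yet".
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)
```

A pipeline step could call, say, `wait_until_healthy("http://localhost:8080/health")` and exit with a non-zero status when it returns `False`, so the deploy-to-ECS step never runs against a container that failed its check.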

With this solution, we reduced the client's time-to-market from months to days, because their developers could containerise their application through the pipeline and share the container images with other developers.

Sharing images in this way let the developers carry out code development and testing more smoothly, which improved collaboration within the organisation and increased the speed at which code was developed and tested.

This, in turn, helped decrease the time it took to get the application running in production.

| Containerisation positives | Containerisation negatives |
| --- | --- |
| Containers provide a lightweight, fast and isolated way to run your applications. Because containers are flexible, they can also be backed up and restored faster. | Containerisation is a natural fit for Linux. Windows does have a container environment, but it is not as widely supported as Linux. |
| The application and its dependencies, libraries, binaries and configuration files are “baked” into the container image, which makes it easy to migrate the application and run it anywhere. | Containers share the host OS kernel, so if the kernel has a vulnerability, every container on that host is exposed to it as well. |
| A typical container is much smaller than a virtual machine. | Networking can be tricky: you have to keep containers connected while also keeping them isolated from one another. |
| Isolating applications in containers helps stop malicious code in one container from affecting other containers or the host system. | Containerisation is usually used to build multi-layered infrastructure, with one application per container. This means there is more to monitor than if all of your applications ran on one virtual machine. |
| Containers can help decrease your operating and development costs. | Monitoring your containers is harder than monitoring a virtual machine. |

With the above scenario in mind, I cannot stress enough how important it is for organisations to understand what containers actually do and how this technology can solve their problems.

High-level knowledge of the pros and cons of containerisation is beneficial but, most importantly, our consultants at Devoteam can provide the support and solutions needed to help your company, whatever its size or location, achieve its short- and long-term goals!

If you're looking for help with your container strategy, Devoteam works with your team on "why containers", how to build a strategy and plan, how to assess your workloads for container suitability, and how to build a backlog of change while you build the container platform. We work with your teams to upskill them, leaving you in a position to design, build and run container workloads over the long term. If you don't want to run your own container platform, we are also happy to offer serverless or server-level controlled container platform solutions via AWS services such as serverless ECS on Fargate, EC2 or EKS (Kubernetes).

If you need a hybrid container platform that can run on your on-premises infrastructure, we can also help you build one with AWS EKS Anywhere.

Senior DevOps Consultant